
PLQCD library for Lattice QCD on multi-core machines

A. Abdel-Rehim (a), C. Alexandrou (a,b), N. Anastopoulos (c), G. Koutsou (a), I. Liabotis (d) and N. Papadopoulou (c)

(a) The Cyprus Institute, CaSToRC, 20 Konstantinou Kavafi Street, 2121 Aglantzia, Nicosia, Cyprus
(b) Department of Physics, University of Cyprus, P.O. Box 20537, 1678 Nicosia, Cyprus
(c) Computing Systems Laboratory, School of Electrical and Computer Engineering, National Technical University of Athens, Zografou Campus, 15773 Zografou, Athens, Greece
(d) Greek Research and Technology Network, 56 Mesogion Av., 11527, Athens, Greece

E-mail: a.abdel-rehim@cyi.ac.cy, c.alexandrou@cyi.ac.cy, g.koutsou@cyi.ac.cy, anastop@cslab.ece.ntua.gr, iliaboti@grnet.gr, nikela@cslab.ece.ntua.gr

PLQCD is a stand-alone software library developed under PRACE for lattice QCD. It provides an implementation of the Dirac operator for Wilson-type fermions and a few efficient linear solvers. The library is optimized for multi-core machines using a hybrid parallelization with OpenMP+MPI. The main objective of the library is to provide a scalable implementation of the Dirac operator for efficient computation of the quark propagator. In this contribution, a description of the PLQCD library is given together with some benchmark results.

31st International Symposium on Lattice Field Theory LATTICE 2013
July 29 - August 3, 2013
Mainz, Germany

Speaker: A. Abdel-Rehim.

© Copyright owned by the author(s) under the terms of the Creative Commons Attribution-NonCommercial-ShareAlike Licence. http://pos.sissa.it/

arXiv:1405.0700v1 [hep-lat] 4 May 2014

1. Introduction

Computer hardware for commodity clusters as well as supercomputers has evolved tremendously in the last few years.

Nowadays a typical compute node has between 16 and 64 cores and possibly an accelerator such as a Graphics Processing Unit (GPU) or, lately, an Intel Many Integrated Core (MIC) card. This trend of packing many low-powered but massively parallel processing units is expected to continue as supercomputing technology pursues the Exascale regime. Current technology trends indicate that bandwidth to main memory will continue to lag behind computational power, which requires rethinking the design of lattice QCD codes so that they can run efficiently on such architectures.

Taking this into account, PRACE [1] allocated resources for community code scaling activities in many computationally intensive areas, including lattice QCD. The work presented here was developed under PRACE and focuses on scaling codes for multi-core machines. It deals with community codes, and more specifically with certain computationally intensive kernels in these codes, in order to improve their scaling and performance on multi-core architectures. We have carried out optimization work on the tmLQCD [2, 3] code and have developed a new hybrid MPI/OpenMP library (PLQCD) with optimized implementations of the Wilson Dirac kernel and a selected set of linear solvers. Our partners in this project have also performed optimization work for the Molecular Dynamics integrators used in Hybrid Monte Carlo codes, and also for Landau gauge fixing. This was done within the Chroma software suite [4] and will not be discussed here (see [5] for more information). Many other community codes of course exist but were not considered in this work (see [6] for an overview).

In what follows, we will first present the work carried out for PLQCD, where we implemented the Wilson Dirac operator and associated linear algebra functions using MPI+OpenMP. In addition to using this hybrid approach for parallelism, we also implemented additional optimizations such as overlapping communication and computation, using compiler intrinsics for vectorization, and implementing the new Advanced Vector Extensions [7] (AVX for Intel or QPX for Blue Gene/Q) that recently became available in new generations of processors such as the Intel Sandy Bridge. The work done for the tmLQCD package will then be presented, where we implemented some new efficient linear solvers, in particular those based on deflation such as the EigCG solver [8], for which we will give some benchmark results.

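2. Dirac operator optimizations

A key component of the lattice Dirac operator is the hopping part given by Eq. (2.1):

\[ \psi(x) = \sum_{\mu=0}^{3} \left[ U_\mu(x)\,(1-\gamma_\mu)\,\phi(x+e_\mu) + U_\mu^\dagger(x-e_\mu)\,(1+\gamma_\mu)\,\phi(x-e_\mu) \right], \tag{2.1} \]

where $U_\mu(x)$ is the gauge link matrix in the direction $\mu$ at site $x$, $\gamma_\mu$ are the Dirac matrices and $e_\mu$ is a unit vector in the direction $\mu$. $\phi$ and $\psi$ are the input and output spinors, respectively. Equation (2.1) can be rewritten in terms of two auxiliary fields $\theta^+_\mu(x) = (1-\gamma_\mu)\,\phi(x)$ and $\theta^-_\mu(x) = U_\mu^\dagger(x)\,(1+\gamma_\mu)\,\phi(x)$ as

\[ \psi(x) = \sum_{\mu=0}^{3} \left[ U_\mu(x)\,\theta^+_\mu(x+e_\mu) + \theta^-_\mu(x-e_\mu) \right]. \tag{2.2} \]

Because of the structure of the $\gamma_\mu$ matrices, only the upper two spin components of $\theta^\pm_\mu$ need to be computed, since the lower two spin components are related to the upper ones [9]. In the following we describe some of the optimizations performed for the hopping matrix.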
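As a rough picture of the data involved, each half-spinor field carries two spin components of three colors per site, and the term $U_\mu(x)\,\theta^+_\mu(x+e_\mu)$ amounts to one 3×3 complex matrix acting on two color vectors. The sketch below is not taken from the PLQCD source; the type and function names (su3, half_spinor, su3_multiply) are hypothetical and the layout is only an assumption.

```c
#include <assert.h>
#include <complex.h>

/* Hypothetical types, for illustration only. */
typedef struct { double complex c[3][3]; } su3;         /* gauge link U_mu(x)  */
typedef struct { double complex s[2][3]; } half_spinor; /* 2 spins x 3 colors  */

/* out = U * in, applied to each of the two spin components.
 * out and in must not alias, since out is written while in is still read. */
static void su3_multiply(half_spinor *out, const su3 *U, const half_spinor *in)
{
    assert(out != in);
    for (int spin = 0; spin < 2; spin++) {
        for (int a = 0; a < 3; a++) {
            double complex sum = 0.0;
            for (int b = 0; b < 3; b++)
                sum += U->c[a][b] * in->s[spin][b];
            out->s[spin][a] = sum;
        }
    }
}
```

Working on half spinors means this multiplication is applied to two color vectors per link rather than four.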

2.1 Hybrid parallelization with MPI and OpenMP

OpenMP provides a simple approach to multi-threading since it is implemented as compiler directives. One can incrementally add multi-threading to the code and also use the same code with multi-threading turned on or off. Since the main component in the hopping matrix (Dirac operator) is a large for loop over lattice sites, it is natural to use the parallel for-loop construct of OpenMP. The performance of the hybrid code is then tested against the pure MPI version. We perform a weak scaling test by fixing the local volume per core (or thread) and increasing the number of MPI processes. The test was done on the Hopper machine at NERSC, which is a Cray XE6 [10]. Each compute node has two twelve-core AMD 'Magny-Cours' processors at 2.1 GHz, such that each group of 6 cores shares the same cache. We find that the performance of the hybrid version is maximal when assigning at most 6 threads per MPI process, such that these 6 OpenMP threads share the same L3 cache. In Fig. 1 we show the performance of the pure MPI version and of the MPI+OpenMP version with 6 threads per MPI process for a total number of cores up to 49,152. From these results we first notice that using OpenMP leads to a slight degradation in performance compared to the pure MPI case. However, as we see in the case with a local volume of $12^4$, the hybrid approach performs better as we go to a large number of cores. Similar behavior has also been observed for other codes from different computational sciences (see the case studies on Hopper [11]).

Figure 1: Weak scaling test for the hopping matrix on a Cray XE6 machine with local lattice volume per core $8^4$ (left) and $12^4$ (right).
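The parallel for-loop construct mentioned in this section can be sketched as follows. This is a minimal illustration, not the PLQCD kernel: site_kernel is a hypothetical stand-in for the per-site hopping term, and the field is reduced to one double per site.

```c
#include <assert.h>
#include <stddef.h>

/* Hypothetical per-site kernel standing in for the hopping term:
 * here it simply doubles the input value at site x. */
static double site_kernel(const double *in, size_t x)
{
    return 2.0 * in[x];
}

/* Apply the kernel to every local lattice site.
 * When compiled with OpenMP enabled, the iterations are shared among
 * the threads of the calling MPI process; when compiled without it,
 * the pragma is ignored and the same loop runs serially. */
void apply_sites(double *out, const double *in, size_t nsites)
{
    assert(out != NULL && in != NULL);
    #pragma omp parallel for schedule(static)
    for (long x = 0; x < (long)nsites; x++)
        out[x] = site_kernel(in, (size_t)x);
}
```

With GCC, for example, threading is switched on with the -fopenmp flag and the thread count is set through the OMP_NUM_THREADS environment variable, which is how a setting such as 6 threads per MPI process would typically be selected.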

2.2 Overlapping communication with computation

Typically in lattice codes one first computes the auxiliary half-spinor fields $\theta^\pm_\mu$ as given in Equation (2.2) and then communicates their values on the boundaries between neighboring processes in the $+\mu$ and $-\mu$ directions. In a blocking communication scheme, computation halts until communication of the boundaries completes. An alternative approach is to overlap communication with computation by dividing the lattice sites into bulk sites, for which nearest neighbors are available locally, and boundary sites, for which the nearest neighbors are located on neighboring processes and which can therefore only be operated upon after communication. The result $\psi$ is then computed in the following order:

- Compute $\theta^+_\mu$ and begin communicating them to the neighboring MPI process in the $-\mu$ direction.
- Compute $\theta^-_\mu$ and begin communicating them to the neighboring MPI process in the $+\mu$ direction.
- Compute the result $\psi(x)$ on the bulk sites while the neighbors are being communicated.
- Wait for the communications in the $-\mu$ directions to finish, then compute the contributions $\sum_{\mu=0}^{3} U_\mu(x)\,\theta^+_\mu(x+e_\mu)$ to the result on the boundary sites.
- Wait for the communications in the $+\mu$ directions to finish, then compute the contributions $\sum_{\mu=0}^{3} \theta^-_\mu(x-e_\mu)$ on the boundary sites.

Communication is done using the non-blocking MPI functions MPI_Isend, MPI_Irecv and MPI_Wait.
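The split into bulk and boundary sites that the steps above rely on can be sketched without any MPI calls. The code below assumes, purely for illustration, that the lattice is distributed over processes in all four directions and that sites are indexed lexicographically; the function names are hypothetical.

```c
#include <assert.h>

/* Assumption: the lattice is split across MPI processes in all four
 * directions, so a site is a boundary site if any of its coordinates
 * lies on a face of the local volume. */
static int is_boundary(const int x[4], const int dims[4])
{
    for (int mu = 0; mu < 4; mu++)
        if (x[mu] == 0 || x[mu] == dims[mu] - 1)
            return 1;
    return 0;
}

/* Fill bulk[] and boundary[] with lexicographic site indices.
 * Both arrays must be able to hold the full local volume.
 * Returns the number of bulk sites; *nboundary receives the rest. */
static int split_sites(const int dims[4], int *bulk, int *boundary,
                       int *nboundary)
{
    int nbulk = 0, nbnd = 0;
    int x[4];
    assert(bulk && boundary && nboundary);
    for (x[0] = 0; x[0] < dims[0]; x[0]++)
    for (x[1] = 0; x[1] < dims[1]; x[1]++)
    for (x[2] = 0; x[2] < dims[2]; x[2]++)
    for (x[3] = 0; x[3] < dims[3]; x[3]++) {
        int idx = ((x[0] * dims[1] + x[1]) * dims[2] + x[2]) * dims[3] + x[3];
        if (is_boundary(x, dims))
            boundary[nbnd++] = idx;
        else
            bulk[nbulk++] = idx;
    }
    *nboundary = nbnd;
    return nbulk;
}
```

Under this assumption an $8^4$ local volume has $6^4 = 1296$ bulk sites out of 4096, so about 68% of the sites wait on communicated data, while a $12^4$ local volume has $10^4 = 10000$ bulk sites out of 20736. The larger the local volume, the more bulk work is available to hide the communication.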

A possible drawback of this approach is that one accesses $\psi(x)$ and $U_\mu(x)$ in an order different from the order in which they are stored in memory. This, however, can be partially circumvented by using prefetching hints in the code. We have tested the effect of prefetching for sequential and random access of spinor and link fields. The test was done using a separate benchmark kernel which isolates the link-spinor multiplication. As can be seen in Fig. 3, prefetching becomes important for a large number of sites, i.e. when the data (spinors and links) cannot fit in the cache memory, which is the typical situation for lattice calculations. Accessing the sites randomly also reduces the performance, as would be expected. In this case one can improve the situation by defining a pointer array, e.g. for the spinors ψ(i) = &ψ(x[i]), where x[i] is the site to be accessed at step i of the loop, as we show in pseudo-code in Fig. 2. These pointers can be defined a priori. This improves the predictive ability of the hardware, as is shown in Fig. 3, where we compare the different prefetching and addressing schemes.
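One way to read the pointer-array idea is sketched below. This is an assumption-laden illustration rather than the authors' benchmark kernel: the spinor layout and function names are hypothetical, and the prefetch hint uses the GCC/Clang builtin __builtin_prefetch, which is one possible way of issuing such hints.

```c
#include <assert.h>
#include <stddef.h>

/* Hypothetical spinor layout: 4 spins x 3 colors, real and imaginary parts. */
typedef struct { double re[12], im[12]; } spinor;

/* Build the pointer array once, before the main loop:
 * ptr[i] = &psi[x[i]], where x[i] is the site visited at step i. */
static void build_pointers(spinor **ptr, spinor *psi, const size_t *x, size_t n)
{
    assert(ptr && psi && x);
    for (size_t i = 0; i < n; i++)
        ptr[i] = &psi[x[i]];
}

/* Visit the sites in the order given by ptr[], hinting the next site
 * to the cache while the current one is being processed. The "work"
 * here is a placeholder sum over one component. */
static double sum_first_component(spinor *const *ptr, size_t n)
{
    double sum = 0.0;
    for (size_t i = 0; i < n; i++) {
#if defined(__GNUC__)
        if (i + 1 < n)
            __builtin_prefetch(ptr[i + 1], 0, 3); /* read access, high locality */
#endif
        sum += ptr[i]->re[0];
    }
    return sum;
}
```

Because the addresses are known in advance, the loop body never has to compute a site index, and the address of the next site is available early enough to be prefetched.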

Sequential access for (i = 0; i [...]

[...] Sandy Bridge processors and later by AMD in their Bulldozer processor. The 16 XMM registers of SSE3 are now 256 bits wide and known as YMM registers. AVX-capable floating-point units are able to operate on 4 double-precision or 8 single-precision floating-point numbers at a time. Implementing these extensions in the vectorized parts of lattice codes has the potential of providing a gain of up to a factor of 2 in an ideal situation, although in practice this depends on the layout of the lattice data. We provided an implementation of these extensions using inline intrinsics. In this implementation a single SU(3) matrix multiplies two SU(3) vectors simultaneously. A gain of about a factor of 1.5 is achieved for the hopping matrix in the tmLQCD code in double precision, as shown in Fig. 4. For illustration, a code snippet for multiplying two complex numbers by two complex numbers using AVX is shown in Fig. 5.

3. EigCG solver for Twisted-Mass fermions

Twisted-Mass fermions offer the advantage of automatic O(a) improvement when tuned to maximal twist [12]. Within this development work we have added an incremental deflation algorithm, known as EigCG, to the tmLQCD package. Numerical tests showed a considerable speed-up of the solution of the linear systems on the largest volumes simulated by the European Twisted Mass Collaboration (ETMC). For illustration, we show in Fig. 6 the time to solution with EigCG on a Twisted-Mass configuration with 2+1+1 dynamical flavors, with lattice size $48^3 \times 96$ at $\beta = 2.1$ and pion mass ≈ 230 MeV. In this case the total number of deflated eigenvectors was 300, built incrementally by computing 10 eigenvectors during the solution of each of the first 30 right-hand sides, using a search subspace of size 60. All systems are solved in double precision to a relative tolerance of $10^{-8}$.
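For orientation, the way accumulated eigenvectors are typically used to deflate a later solve can be written in one line. This is the standard Galerkin projection described for EigCG in Ref. [8], stated here as a sketch in exact arithmetic; the symbols $A$, $V$, $b$ and $x_0$ are notation introduced for this illustration, with $A$ the Hermitian positive-definite matrix passed to CG and the columns of $V$ the approximate eigenvectors collected so far.

```latex
% Deflated initial guess for the next right-hand side b:
% project the initial residual onto the span of the stored eigenvectors V.
\[
  x_0' \;=\; x_0 \;+\; V \left( V^\dagger A V \right)^{-1} V^\dagger \left( b - A x_0 \right)
\]
% With 10 new vectors added per right-hand side over the first 30 systems,
% V grows to 30 x 10 = 300 columns, after which it is kept fixed.
```

CG started from $x_0'$ no longer has to resolve the components along the lowest modes captured in $V$, which is where the reduction in iteration count comes from.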

Figure 4: Comparing the performance of the hopping matrix of tmLQCD using SSE3 and AVX in double precision on an Intel Sandy Bridge processor.

    #include <immintrin.h>

    /* t0: a+b*I, e+f*I  and  t1: c+d*I, g+h*I
     * return: (ac-bd)+(ad+bc)*I, (eg-fh)+(eh+fg)*I */
    static inline __m256d complex_mul_regs_256(__m256d t0, __m256d t1)
    {
        __m256d t2;
        t2 = t1;
        t1 = _mm256_unpacklo_pd(t1, t1);   /* c,  c,  g,  g  */
        t2 = _mm256_unpackhi_pd(t2, t2);   /* d,  d,  h,  h  */
        t1 = _mm256_mul_pd(t1, t0);        /* ac, bc, eg, fg */
        t2 = _mm256_mul_pd(t2, t0);        /* ad, bd, eh, fh */
        t2 = _mm256_shuffle_pd(t2, t2, 5); /* bd, ad, fh, eh */
        t1 = _mm256_addsub_pd(t1, t2);     /* ac-bd, bc+ad, eg-fh, fg+eh */
        return t1;
    }

Figure 5: Multiplying two complex numbers by two complex numbers of type double using AVX instructions.

Figure 6: Solution time per process for the first 35 right-hand sides using Incremental EigCG as compared to CG on a Twisted-Mass configuration with lattice size $48^3 \times 96$ at $\beta = 2.1$ and pion mass ≈ 230 MeV.

4. Conclusions and Summary

We have carried out development effort for a few selected kernels used in lattice QCD. The first of these efforts included the development of a hybrid MPI/OpenMP library which includes parallelized kernels for the Wilson Dirac operator and a few associated solvers. A number of parallelization strategies have been investigated, such as overlapping communication with computation. The code has been shown to scale fairly well on the Cray XE6. In terms of single-process performance, we carried out initial vectorization efforts for AVX, where we see an improvement of a factor of 1.5 compared to the ideal factor of 2. In addition we have investigated several data-ordering and associated prefetching strategies.

For the case of tmLQCD, the main software code of the ETMC collaboration, we have implemented an efficient linear solver which incrementally deflates the twisted-mass Dirac operator to give a speed-up of about a factor of 3 when enough right-hand sides are required. This is already in use in production projects, such as in Refs. [14] and [13].

All codes are publicly available. PLQCD is available through the HPCFORGE website at the Swiss National Supercomputing Centre (CSCS), where more information is available within the code documentation. Our EigCG implementation in tmLQCD is available via GitHub.

Acknowledgements

This talk was part of a coding session sponsored partially by the PRACE-2IP project, as part of the "Community Codes Development" Work Package 8. PRACE-2IP is a 7th Framework EU-funded project (http://www.prace-ri.eu/, grant agreement number: RI-283493). We would like to thank the organizers of the 2013 Lattice meeting for their strong support in making the coding session a success and for providing all organizational support. We would like to thank C. Urbach, A. Deuzeman, B. Kostrzewa, Hubert Simma, S. Krieg, and L. Scorzato for very stimulating discussions during the development of this project. We acknowledge the computing resources from Tier-0 machines of PRACE, including the JUQUEEN and Curie machines, as well as the Todi machine at CSCS. We also acknowledge the computing support from NERSC and the Hopper machine.

References

[1] http://www.prace-ri.eu/.
[2] K. Jansen and C. Urbach, Comput. Phys. Commun. 180, 2717 (2009), [arXiv:0905.3331].
[3] ETM Collaboration, https://github.com/etmc/tmLQCD.
[4] http://usqcd.jlab.org/usqcd-docs/chroma/.
[5] See the public deliverable D8.3 on the PRACE website under PRACE-2IP.
[6] A. Deuzeman, PoS(LATTICE 2013).
[7] See the Intel Developer manual.
[8] A. Stathopoulos and K. Orginos, Computing and deflating eigenvalues while solving multiple right-hand side linear systems with an application to quantum chromodynamics, SIAM J. Sci. Comput. 32(1), 439-462 (2010), [arXiv:0707.0131].
[9] See for example the documentation of the DDHMC code by M. Lüscher.
[10] The Hopper Cray XE6 machine at NERSC.
[11] See documentation for combining MPI and OpenMP on the NERSC website.
[12] R. Frezzotti et al. [Alpha Collaboration], Lattice QCD with a chirally twisted mass term, JHEP 0108, 058 (2001), [hep-lat/0101001].
[13] C. Alexandrou, M. Constantinou, S. Dinter, V. Drach, K. Hadjiyiannakou, K. Jansen, G. Koutsou and A. Vaquero, arXiv:1309.7768 [hep-lat].
[14] A. Abdel-Rehim, C. Alexandrou, M. Constantinou, V. Drach, K. Hadjiyiannakou, K. Jansen, G. Koutsou and A. Vaquero, arXiv:1310.6339 [hep-lat].


- casesandybridge相关文档

- E5sandybridge

- PenguinComputingClustersandybridge

- cumulativesandybridge

- 5.sandybridge

- 希捷sandybridge

- layerssandybridge

HostNamaste$24 /年,美国独立日VPS优惠/1核1G/30GB/1Gbps不限流量/可选达拉斯和纽约机房/免费Windows系统/

HostNamaste是一家成立于2016年3月的印度IDC商家,目前有美国洛杉矶、达拉斯、杰克逊维尔、法国鲁贝、俄罗斯莫斯科、印度孟买、加拿大魁北克机房。其中洛杉矶是Quadranet也就是我们常说的QN机房(也有CC机房,可发工单让客服改机房);达拉斯是ColoCrossing也就是我们常说的CC机房;杰克逊维尔和法国鲁贝是OVH的高防机房。采用主流的OpenVZ和KVM架构,支持ipv6,免...

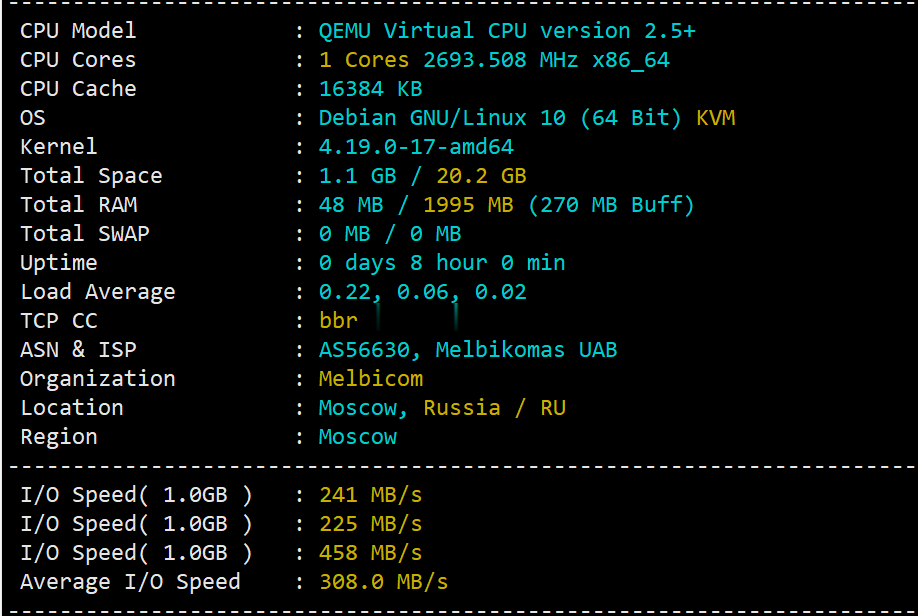

简单测评melbicom俄罗斯莫斯科数据中心的VPS,三网CN2回国,电信双程cn2

melbicom从2015年就开始运作了,在国内也是有一定的粉丝群,站长最早是从2017年开始介绍melbicom。上一次测评melbicom是在2018年,由于期间有不少人持续关注这个品牌,而且站长貌似也听说过路由什么的有变动的迹象。为此,今天重新对莫斯科数据中心的VPS进行一次简单测评,数据仅供参考。官方网站: https://melbicom.net比特币、信用卡、PayPal、支付宝、银联...

2021年国内/国外便宜VPS主机/云服务器商家推荐整理

2021年各大云服务商竞争尤为激烈,因为云服务商家的竞争我们可以选择更加便宜的VPS或云服务器,这样成本更低,选择空间更大。但是,如果我们是建站用途或者是稳定项目的,不要太过于追求便宜VPS或便宜云服务器,更需要追求稳定和服务。不同的商家有不同的特点,而且任何商家和线路不可能一直稳定,我们需要做的就是定期观察和数据定期备份。下面,请跟云服务器网(yuntue.com)小编来看一下2021年国内/国...

sandybridge为你推荐

-

游戏用户超6亿32游戏钱包是什么来的?网易网盘关闭入口网易网盘 怎么没有了psbc.com怎样登录wap.psbc.com月神谭求几个个性网名:haole018.comse.haole004.com为什么手机不能放?www.haole012.com012.qq.com是真的吗www.119mm.com看电影上什么网站??partnersonline电脑内一切浏览器无法打开广告法请问违反了广告法,罚款的标准是什么www.03024.comwww.sohu.com是什么